Grid computing is like turning many small sparks into a bright, coordinated flame. By pooling compute power from multiple machines across locations, organizations can tackle large tasks faster than a single server could manage. At ISSGC.org we break down grid computing into friendly, practical terms so developers, system administrators and researchers can grasp the core ideas, the real world benefits, and how to start building scalable distributed systems today.

What is Grid Computing?

Grid computing is a distributed computing approach that connects compute resources from diverse locations to work on a common task. The idea is simple in spirit but powerful in practice: take many machines, weave them into a single virtual computing resource, and manage the work as if it were running on a single system. Grid computing emphasizes sharing, coordination, and collaboration across administrative boundaries to solve problems that demand significant processing power, large data sets, or both.

Key ideas to remember:

– It is resource driven rather than location driven. The focus is on what is available and how to use it, not where it sits.

– It enables parallelism and concurrency across a network of machines.

– It often involves both compute and data resources to support complex workloads.

In practice you will see grid computing used to run scientific simulations, big data analyses, engineering workflows, and multi-institution collaborations. It is especially valuable when you need to use idle computing power, aggregate storage, or coordinate jobs that must run where the data resides.

How Grid Computing Works

Grid computing is built on three essential layers: the hardware resources themselves, the middleware that coordinates those resources, and the applications that submit jobs and consume results. Here is a simplified view of the workflow.

1. Resources become part of the grid

- User nodes (personal workstations or departmental clusters) make their idle cycles available.

- Provider nodes are dedicated or shared servers that contribute compute power or storage.

- A central or distributed control mechanism registers resources, advertises capabilities, and enforces policies.

2. Middleware orchestrates tasks

- The middleware acts as the glue between users and resources. It discovers available resources, negotiates access, and schedules tasks.

- It handles data transfer, job submission, and execution tracking.

- It provides security features so only authorized users can run jobs and access data.

3. Jobs are scheduled and executed

- A user submits a job or workflow describing what needs to run and what data is required.

- The scheduling engine breaks the job into tasks and assigns them to suitable resources based on factors like availability, data locality, and performance goals.

- As tasks complete, results are gathered, validated, and delivered to the user or downstream workflows.

4. Results are collected and reused

- Output data is stored in shared or mirrored locations for easy access.

- Provenance and logs are kept to help with reproducibility and debugging.

- The entire process may be repeated or scaled to larger problem sizes.

Benefits of the middleware driven approach include improved resource utilization, faster turnaround times for large tasks, and the ability to scale by adding more nodes to the grid without rewriting applications.
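
The scheduling step in the workflow above can be sketched in a few lines. This is a minimal illustration rather than how any particular middleware works: the `Resource` and `Task` classes, the `schedule` function, and the node and site names are all hypothetical. It assumes each task needs one slot and prefers a resource at the site that already holds the task's input data.

```python
from dataclasses import dataclass, field


@dataclass
class Resource:
    name: str
    site: str
    free_slots: int
    assigned: list = field(default_factory=list)


@dataclass
class Task:
    task_id: str
    data_site: str  # site where the task's input data lives


def schedule(tasks, resources):
    """Place each task on a resource, preferring the site that holds its data."""
    placement = {}
    for task in tasks:
        candidates = [r for r in resources if r.free_slots > 0]
        if not candidates:
            placement[task.task_id] = None  # no capacity left: task stays queued
            continue
        # Local data sorts first (False < True), then the resource with most free slots.
        best = min(
            candidates,
            key=lambda r: (r.site != task.data_site, -r.free_slots),
        )
        best.free_slots -= 1
        best.assigned.append(task.task_id)
        placement[task.task_id] = best.name
    return placement


resources = [Resource("node-a", "site-1", 2), Resource("node-b", "site-2", 1)]
tasks = [
    Task("t1", "site-2"),
    Task("t2", "site-1"),
    Task("t3", "site-2"),
    Task("t4", "site-1"),
]
placement = schedule(tasks, resources)
print(placement)
```

In this toy run the third task falls back to a remote node once the local one is full, and the fourth stays queued. A real scheduler would also weigh transfer cost, priorities, and policies.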

Key Components of a Grid

Understanding the core components helps you design, deploy, and troubleshoot grid systems.

Nodes

- User nodes: workstations or small clusters that submit jobs.

- Provider nodes: high capacity servers or storage systems that execute tasks and store data.

- Controller nodes: orchestrate the grid, manage scheduling queues, and enforce policies.

Grid Middleware

- Resource discovery: finding available compute and storage resources.

- Scheduling and workload management: assigning tasks to the best-fitting resources.

- Data management: moving data where it is needed while maintaining integrity.

- Security and policy enforcement: authentication, authorization, and auditing.
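
Resource discovery can be pictured as a registry that nodes advertise themselves to and that schedulers query. The sketch below is an in-memory illustration only; the `Advert` and `Registry` names, their fields, and the example node names are assumptions made for this example, not the interface of any real grid middleware.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Advert:
    """What a node advertises about itself (hypothetical fields)."""
    name: str
    cpus: int
    memory_gb: int
    tags: frozenset


class Registry:
    def __init__(self):
        self._adverts = {}

    def register(self, advert):
        # Re-registering under the same name replaces the older advert.
        self._adverts[advert.name] = advert

    def discover(self, min_cpus=0, min_memory_gb=0, required_tags=()):
        """Return the names of resources that satisfy every requirement."""
        needed = frozenset(required_tags)
        return sorted(
            a.name
            for a in self._adverts.values()
            if a.cpus >= min_cpus
            and a.memory_gb >= min_memory_gb
            and needed <= a.tags
        )


registry = Registry()
registry.register(Advert("desk-1", 8, 16, frozenset({"linux"})))
registry.register(Advert("hpc-1", 64, 256, frozenset({"linux"})))
registry.register(Advert("hpc-2", 32, 128, frozenset({"linux", "gpu"})))
matches = registry.discover(min_cpus=16, required_tags=["gpu"])
print(matches)
```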

Data and Security Infrastructure

- Shared data repositories or data transfer mechanisms to bring data to the compute resources or bring results back to the user.

- Encryption, authentication, and access controls to protect sensitive information.

- Provenance tracking to ensure reproducibility of results.

Job Scheduling and Workflow Management

- Scheduling algorithms decide which tasks run where and when.

- Workflows define complex sequences of tasks with dependencies.

- Priority rules and quality of service (QoS) considerations help meet deadlines and SLAs.
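
To make the idea of a workflow with dependencies concrete, here is a small sketch that uses Python's standard `graphlib` module (Python 3.9 or later) to group tasks into waves that could run in parallel. The task names and the `execution_waves` helper are hypothetical; real workflow managers add retries, data staging, and priorities on top of this ordering.

```python
from graphlib import TopologicalSorter

# Hypothetical workflow: each task maps to the set of tasks it depends on.
workflow = {
    "preprocess": set(),
    "simulate_a": {"preprocess"},
    "simulate_b": {"preprocess"},
    "aggregate": {"simulate_a", "simulate_b"},
}


def execution_waves(dependencies):
    """Group tasks into waves; every task in a wave has all dependencies met."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)  # mark the whole wave finished to unlock the next
    return waves


waves = execution_waves(workflow)
print(waves)
```

Tasks within one wave are independent of each other, so a scheduler is free to send them to different nodes at the same time.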

Types of Grid Computing

Grid computing comes in a few distinct flavors, each suited to different kinds of problems and data flows.

Computational grid computing

- Focuses on large-scale compute tasks such as simulations, render farms, and numerical modeling.

- Emphasizes CPU cycles and parallel execution to reduce wall clock time.

Data grid computing

- Centers on managing and moving large data sets across distributed storage resources.

- Optimizes data locality, replication, and access speed for data-intensive workloads.

Collaborative grid computing

- Enables multiple organizations to share resources within agreed policies.

- Supports cross-institution research projects and community driven efforts.

Modular grid computing

- Builds grids from reusable components and plug-in modules.

- Allows teams to mix and match middleware, schedulers, and data tools to fit specific workflows.

Benefits and Challenges

Grid computing brings many advantages but also requires careful planning and governance.

Benefits

– Efficiency and speed: parallel execution reduces time to results.

– Cost effectiveness: utilize idle resources and avoid over provisioning.

– Flexibility: easily scale by adding more nodes or storage.

– Reliability: redundancy and data replication improve fault tolerance.

– Resource sharing: cross-organization collaboration becomes practical.

Challenges

– Complexity: setting up and maintaining middleware and data management can be intricate.

– Security and trust: cross-domain access requires strong authentication and policy controls.

– Data locality: moving large data sets can be expensive in bandwidth and time.

– Scheduling overhead: poor algorithms can waste cycles and reduce performance.
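
The data locality challenge is easy to quantify with rough arithmetic. The sketch below estimates transfer time as data size divided by usable bandwidth and compares shipping the data to a faster site against computing where the data already sits. The function names, the `efficiency` factor, and the example figures are illustrative assumptions, not measurements.

```python
def transfer_seconds(size_gb, bandwidth_gbps, efficiency=1.0):
    """Estimate seconds to move size_gb gigabytes over a bandwidth_gbps link.

    efficiency is the assumed fraction of nominal bandwidth actually achieved.
    """
    if bandwidth_gbps <= 0 or not 0 < efficiency <= 1:
        raise ValueError("bandwidth must be positive and efficiency in (0, 1]")
    size_gigabits = size_gb * 8  # 8 bits per byte
    return size_gigabits / (bandwidth_gbps * efficiency)


def prefer_data_site(size_gb, bandwidth_gbps, runtime_at_data_site_s,
                     runtime_at_fast_site_s):
    """True if computing where the data lives beats shipping it elsewhere."""
    ship_and_run = transfer_seconds(size_gb, bandwidth_gbps) + runtime_at_fast_site_s
    return runtime_at_data_site_s < ship_and_run


# Illustrative figures: 500 GB over a 1 Gbit/s link at full efficiency.
move_time = transfer_seconds(500, 1)
print(move_time)  # 4000.0 seconds, a little over an hour
print(prefer_data_site(500, 1, runtime_at_data_site_s=3000,
                       runtime_at_fast_site_s=1000))
```

With these numbers, a site that runs the job three times slower still wins, because the transfer alone costs more than the slower run.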

Real World Use Cases

Grid computing shines in tasks that are naturally parallel and data hungry. Here are representative scenarios.

- Scientific research: climate modeling, molecular dynamics, and astrophysics simulations.

- Financial services: large scale risk simulations, portfolio optimization, and stress testing.

- Engineering: computational fluid dynamics and structural analysis at scale.

- Big data analytics: large ensemble analyses, genomics, and sensor networks.

- Education and citizen science: distributed data processing across university clusters.

Grid versus Cloud Computing

A practical question is how grid computing compares to cloud computing. They share a common goal of delivering scalable resources, but they approach it differently.

- Control and policy: grid often emphasizes collective governance across organizations, while cloud prioritizes centralized control by a single provider.

- Data locality: grid workflows can be designed to run data close to where it resides, reducing transfer costs; cloud may rely more on centralized storage and network bandwidth.

- Resource heterogeneity: grids deliberately integrate diverse hardware; clouds typically present uniform interfaces across homogeneous infrastructure.

- Cost models: grids can leverage idle resources and shared infrastructure; clouds follow pay-as-you-go pricing with dynamic provisioning.

Both paradigms can be complementary. Hybrid architectures let you tie on-premise grids to cloud resources to absorb peak workloads or to access specialized hardware.

Getting Started with Grid Computing

If you are curious about implementing grid computing in your organization, here is a practical starter path.

1) Define the workload

– Identify tasks that are embarrassingly parallel and data heavy.

– Clarify performance goals, deadlines, and data governance requirements.

2) Inventory resources

– List available compute nodes, storage capacity, and network bandwidth.

– Assess reliability, maintenance windows, and security policies.

3) Choose middleware and tooling

– Evaluate workload management systems and grid middleware options.

– Look for compatibility with your operating systems and data formats.

4) Plan data management

– Decide how data will be stored, replicated, and moved.

– Implement data integrity checks and provenance logging.

5) Implement security foundations

– Use strong authentication, authorization, and auditing.

– Encrypt sensitive data in transit and at rest as appropriate.

6) Pilot and scale

– Start with a small, well defined workload.

– Monitor performance and iterate on scheduling strategies before expanding.

Middleware Tools and Components You Might Encounter

A strong grid uses mature middleware to coordinate tasks, data, and security. Some common categories and examples include:

Job scheduling and workload management

- Systems that accept jobs, split them into tasks, and assign them to appropriate resources.

- Features to look for: SLA awareness, backfilling, and dependency management.

Resource discovery and monitoring

- Tools to discover available resources, track utilization, and detect failures.

Data management and transfer

- Mechanisms for moving input data to compute nodes and collecting results back.

Security and identity management

- PKI based authentication, role based access control, and policy enforcement.

Examples you may encounter in grid environments

– Globus and Globus Toolkit style components for data movement and authentication.

– UNICORE style middleware for unified access to heterogeneous resources.

– GridWay or other metaschedulers that provide job submission and advanced scheduling policies.

– Workflow orchestration layers enabling complex multi step pipelines.

Tip for practitioners: pick middleware that aligns with your existing infrastructure, supports open standards, and has an active user community. This makes troubleshooting easier and accelerates onboarding for your team.

Performance Visualization and Monitoring

A grid that operates at scale benefits from clear visibility into how resources are performing. Key metrics to track include:

- Utilization: how effectively CPU, memory, and storage are used across the grid.

- Throughput: tasks completed per time unit and data processed per second.

- Job turnaround time: time from submission to result delivery.

- Data transfer performance: latency and bandwidth for moving large data sets.

- Failure rates and retry counts: how often tasks fail and how quickly they recover.

Common approaches and tools

– Real time dashboards that show resource availability and queue lengths.

– Historical plots to identify trends and plan capacity.

– Alerting on SLA violations or resource saturation.

Performance visualization not only helps operators keep the grid healthy, it also informs decisions about where to add capacity or optimize scheduling algorithms.
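
As a rough illustration of how the metrics above can be derived from job logs, the following sketch computes throughput, mean turnaround time, utilization, and failure rate from a handful of records. The `JobRecord` fields and the sample values are invented for this example, and it assumes every job occupies exactly one slot while it runs.

```python
from dataclasses import dataclass


@dataclass
class JobRecord:
    submitted: float  # seconds from the start of the observation window
    started: float
    finished: float
    succeeded: bool


def summarize(records, window_seconds, total_slots):
    """Compute basic grid health metrics over one observation window."""
    completed = [r for r in records if r.succeeded]
    # Failed jobs still consumed slot time, so they count toward utilization.
    busy_seconds = sum(r.finished - r.started for r in records)
    return {
        "throughput_per_hour": len(completed) / (window_seconds / 3600),
        "mean_turnaround_s": (
            sum(r.finished - r.submitted for r in completed) / len(completed)
            if completed else None
        ),
        "utilization": busy_seconds / (window_seconds * total_slots),
        "failure_rate": (
            (len(records) - len(completed)) / len(records) if records else None
        ),
    }


records = [
    JobRecord(0, 10, 610, True),
    JobRecord(0, 20, 920, True),
    JobRecord(100, 120, 420, False),
    JobRecord(200, 300, 1500, True),
]
summary = summarize(records, window_seconds=3600, total_slots=2)
print(summary)
```

Numbers like these are what a dashboard or alerting rule would plot over time.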

Security and Compliance in Grid Computing

Security is essential for grid environments because resources and data may span multiple administrative domains. Key focus areas include:

- Authentication: strong identity verification, often using digital certificates or multi factor methods.

- Authorization: ensuring users can only access resources and data they are allowed to use.

- Data integrity: checksums and verification to prevent corruption during transfers.

- Data privacy: encryption for sensitive data both in transit and at rest.

- Auditability: logs and provenance to track who did what and when.

Best practices

– Use a centralized but flexible policy framework to govern access control.

– Encrypt critical data when it moves between nodes and when it is stored in repositories.

– Regularly review access rights and rotate credentials.

– Implement fault tolerance and secure recovery procedures.
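
The data integrity point above usually comes down to comparing checksums before and after a transfer. Here is a minimal sketch using Python's standard `hashlib`. The helper names `sha256_of_file` and `verify_transfer` are hypothetical, and the choice of SHA-256 is an assumption; a given grid may mandate a different algorithm.

```python
import hashlib


def sha256_of_file(path, chunk_size=1024 * 1024):
    """Hash a file in chunks so large data sets do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_transfer(path, expected_hex):
    """Return True if the received file matches the sender's checksum."""
    return sha256_of_file(path) == expected_hex.lower()
```

The sender would publish the expected digest alongside the data. The receiver calls `verify_transfer` after the copy completes and requests a retransfer on a mismatch.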

Big Data Grids and AI Workloads

Grid computing is a natural partner for big data workloads and AI driven tasks. Large scale experiments in AI often require performing the same training or inference across many configurations, datasets, or hyperparameters. Grid architectures can:

- Distribute data partitions and model training tasks to many nodes.

- Provide the bandwidth needed to move large datasets to compute resources.

- Enable reproducibility by capturing workflows and results across diverse environments.

- Support hybrid workflows that combine traditional HPC tasks with data analytics pipelines.
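
Running the same evaluation across many configurations is the kind of embarrassingly parallel job that grids handle well. The sketch below enumerates a small hyperparameter grid and evaluates each configuration concurrently. A local thread pool stands in for grid nodes, and `evaluate` is a toy scoring function invented for illustration, not a real training run.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import product


def evaluate(config):
    """Hypothetical stand-in for one training run; returns a toy score."""
    lr = config["learning_rate"]
    batch = config["batch_size"]
    score = -abs(lr - 0.01) - abs(batch - 64) / 1000
    return {"config": config, "score": score}


def sweep(grid, max_workers=4):
    """Evaluate every combination in the grid and return (best, count)."""
    keys = sorted(grid)
    configs = [
        dict(zip(keys, values))
        for values in product(*(grid[k] for k in keys))
    ]
    # Each configuration is independent, so the runs can proceed in parallel.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        results = list(pool.map(evaluate, configs))
    return max(results, key=lambda r: r["score"]), len(configs)


best, count = sweep({"learning_rate": [0.1, 0.01, 0.001], "batch_size": [32, 64]})
print(count, best["config"])
```

On an actual grid, each call to `evaluate` would become a submitted job, and the results would be gathered by the middleware rather than by a local pool.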

Case Study: A Collaborative Scientific Grid

Imagine a consortium of universities running climate simulations. Each site contributes compute cycles and stores simulation results locally. A central grid middleware coordinates the submission of multiple ensemble runs, schedules tasks where data resides, and aggregates results for analysis. Researchers gain faster turnaround times without mandating a single centralized datacenter. This is the essence of grid computing in action: cooperation that unlocks bigger insights.

Common Pitfalls and How to Avoid Them

- Underestimating data movement costs: plan data locality and replication strategies early.

- Overly complex workflows: start simple and gradually introduce dependencies.

- Rigid scheduling policies: implement adaptive policies that respond to resource availability.

- Insufficient security planning: invest in authentication and authorization from day one.

The ISSGC.org Perspective

As a resource focused on grid computing basics, ISSGC.org emphasizes practical, actionable guidance. Expect content that covers:

– Job scheduling algorithms and how they impact throughput and latency.

– Middleware issues and best practices for choosing and deploying tools.

– Real world examples of big data grids and scalable distributed systems.

– Clear explanations of core concepts like nodes, middleware, and grid architecture.

– Steps you can follow to build, monitor and optimize grid environments.

Frequently Asked Questions

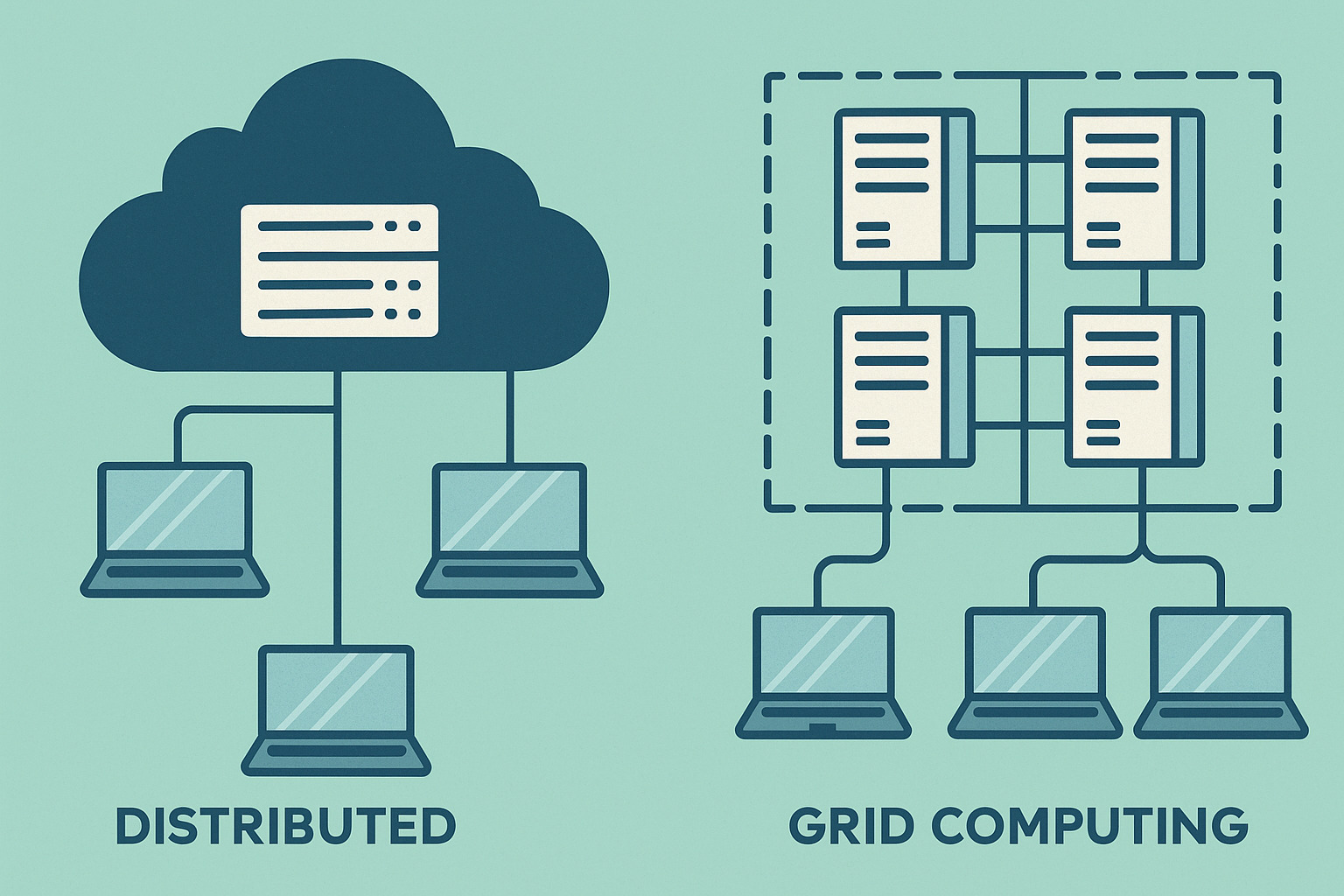

Q: What is the difference between grid computing and distributed computing?

A: Grid computing is a form of distributed computing that emphasizes coordinating heterogeneous resources across multiple administrative domains to work on a common task. Distributed computing broadly includes any system where components located on networked computers communicate and coordinate to perform tasks.

Q: Do I need to run grid computing on-premises to gain benefits?

A: Not necessarily. Many grids use a mix of on-premises and cloud resources. Hybrid approaches can provide flexibility and scale while leveraging existing investments.

Q: What kind of workloads are best suited for grid computing?

A: Workloads that are embarrassingly parallel, data intensive, or require access to distributed datasets and compute resources perform well on grids. Examples include scientific simulations, large scale data analysis, and multi site batch processing.

Final Thoughts

Grid computing unlocks the combined power of multiple machines to solve problems that would be impractical for a single system. It requires thoughtful design, solid middleware, and careful governance to deliver reliable, secure, and scalable performance. If you are exploring grid computing for research, industry, or education, start with a small pilot, pick compatible tools, and build a roadmap that emphasizes data locality, scheduling efficiency, and robust security.

This guide aligns with the ISSGC.org mission to educate on grid computing basics, applications, cybersecurity, and tools. By focusing on practical concepts like job scheduling algorithms, middleware choices, big data grids, and performance visualization, you can move from curiosity to a capable grid computing implementation that scales with your needs.